Unified Access to Multiple Providers

Route requests to OpenAI, Anthropic, Gemini, xAI, Ollama, OpenRouter, and ElevenLabs through a single gateway. Point your existing OpenAI SDKs at it as a drop-in replacement, and get virtual keys, cost tracking, and full observability.

Build, deploy, and observe AI agents in minutes.

No-code multi-agent orchestrator

One API for every LLM provider

Production-ready agents in Go

Build production-grade AI agents entirely through the UI. Configure models, tools, MCP servers, and knowledge bases, then interact with your agents from Slack and Telegram. Versioning, durable execution, and observability are built in.

Configure every aspect of your agent through an intuitive UI. Set system prompts, model parameters, structured output schemas, knowledge bases, and skills; connect MCP servers, function tools, or other agents; add optional human-in-the-loop approval; and more.

Skills bundle prompts, CLI tools, and configuration into portable modules that agents can learn and use only when they are needed.

Add documentation and reference materials to your agents through vector-indexed knowledge bases. Upload Markdown files, PDFs, and text documents, then search them by semantic similarity for context-aware responses.

Build sophisticated multi-agent systems with two powerful patterns. Use Sub Agents to offload context-heavy tasks, or Handoffs to transfer control entirely to specialist agents.
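The difference between the two patterns is who holds control afterwards. A minimal sketch with a hypothetical `Agent` type (not the HasteKit API): a sub agent returns its answer to the caller, which stays in charge, while a handoff makes the target agent the owner of the task.

```go
package main

import (
	"fmt"
	"strings"
)

// Agent is a hypothetical, minimal agent: Handle answers a task and may name
// another agent to hand off to (an empty name means it is done).
type Agent struct {
	Name   string
	Handle func(task string) (answer, handoffTo string)
}

// callSubAgent delegates a self-contained task and returns only the final
// answer. The caller keeps control, and the sub agent's intermediate work
// never enters the caller's context.
func callSubAgent(sub *Agent, task string) string {
	answer, _ := sub.Handle(task)
	return answer
}

// runWithHandoffs transfers control entirely: after a handoff, the new agent
// owns the task and its answer is final. maxHops guards against handoff loops.
func runWithHandoffs(registry map[string]*Agent, start, task string, maxHops int) (owner, answer string) {
	active, ok := registry[start]
	if !ok {
		return "", "unknown agent: " + start
	}
	for hop := 0; hop <= maxHops; hop++ {
		ans, next := active.Handle(task)
		if next == "" {
			return active.Name, ans
		}
		nextAgent, ok := registry[next]
		if !ok {
			return active.Name, "unknown agent: " + next
		}
		active = nextAgent
	}
	return active.Name, "handoff limit reached"
}

func main() {
	summarizer := &Agent{Name: "summarizer", Handle: func(t string) (string, string) {
		return "summary of " + t, ""
	}}
	billing := &Agent{Name: "billing", Handle: func(t string) (string, string) {
		return "billing answer", ""
	}}
	triage := &Agent{Name: "triage", Handle: func(t string) (string, string) {
		if strings.Contains(t, "invoice") {
			return "", "billing" // hand the whole task to the specialist
		}
		return "general answer", ""
	}}

	// Sub agent: the result comes back to the caller.
	fmt.Println(callSubAgent(summarizer, "a long log file"))

	// Handoff: triage gives up control and billing answers.
	registry := map[string]*Agent{"triage": triage, "billing": billing}
	fmt.Println(runWithHandoffs(registry, "triage", "question about an invoice", 5))
}
```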

Execute agent code in isolated, secure containerized sandboxes. Each execution runs in its own container with configurable resource limits, network policies, and filesystem isolation.

Deploy agents where your team already works. Connect to Slack and Telegram with a few clicks, or automate with cron jobs. Each channel gets isolated conversation context with optional completion notifications.

Give agents direct access to your codebase. Sync GitHub repositories and keep them up to date automatically. Agents can read, search, and reason over your code for context-aware assistance.

Run agents that survive infrastructure failures. Built on durable execution primitives, agents automatically checkpoint state and resume from failures without losing progress. Supported engines: Temporal and Restate.

Deep visibility into agent execution. Trace every step, tool call, and decision. Debug complex multi-step workflows with detailed execution traces and performance metrics.


Never expose provider API keys again. Generate virtual keys with fine-grained control over which providers and models each key can access and what rate limits apply. Distribute them to teams or customers with one-click revocation.

```python
from openai import OpenAI

# Use your HasteKit virtual key in place of a provider API key
client = OpenAI(
    base_url="http://gateway.example.com/api/gateway/openai",
    api_key="sk-hk-abc123...",  # Virtual key
)

# Works exactly like the OpenAI SDK
response = client.responses.create(
    model="gpt-4.1-mini",
    input="Hello!",
)
print(response.output_text)
```

Distribute load across multiple provider API keys to maximize throughput and avoid rate limits. Configure weighted distribution, automatic failover, and key rotation.

Know exactly what you're spending. Real-time dashboards show requests, tokens, and costs broken down by provider, model, and project. Full request tracing with OpenTelemetry integration.
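Cost tracking boils down to multiplying token counts by per-model rates and grouping the results by provider, model, or project. A minimal sketch, assuming per-million-token pricing; the `Price` and `Usage` types and all rates are hypothetical placeholders, not real provider prices or HasteKit's data model.

```go
package main

import (
	"fmt"
	"sort"
)

// Price holds per-million-token rates in USD. The rates used below are
// placeholders, not real provider pricing.
type Price struct {
	InputPerM, OutputPerM float64
}

// Usage is one logged request, tagged with the dimensions a dashboard groups by.
type Usage struct {
	Provider, Model, Project  string
	InputTokens, OutputTokens int
}

// cost returns the USD cost of a single request at the given rates.
func cost(u Usage, p Price) float64 {
	return float64(u.InputTokens)/1e6*p.InputPerM + float64(u.OutputTokens)/1e6*p.OutputPerM
}

// totalsBy sums request costs under whatever grouping key the caller picks.
// Models missing from prices are costed at zero here; a real tracker should flag them.
func totalsBy(reqs []Usage, prices map[string]Price, key func(Usage) string) map[string]float64 {
	totals := map[string]float64{}
	for _, u := range reqs {
		totals[key(u)] += cost(u, prices[u.Provider+"/"+u.Model])
	}
	return totals
}

func main() {
	prices := map[string]Price{ // placeholder rates
		"OpenAI/gpt-4.1-mini":         {InputPerM: 1.00, OutputPerM: 2.00},
		"Anthropic/claude-sonnet-4-5": {InputPerM: 3.00, OutputPerM: 6.00},
	}
	reqs := []Usage{
		{"OpenAI", "gpt-4.1-mini", "search", 2_000_000, 500_000},
		{"Anthropic", "claude-sonnet-4-5", "search", 1_000_000, 100_000},
		{"OpenAI", "gpt-4.1-mini", "support", 500_000, 500_000},
	}
	byProvider := totalsBy(reqs, prices, func(u Usage) string { return u.Provider })
	byProject := totalsBy(reqs, prices, func(u Usage) string { return u.Project })
	for _, totals := range []map[string]float64{byProvider, byProject} {
		keys := make([]string, 0, len(totals))
		for k := range totals {
			keys = append(keys, k)
		}
		sort.Strings(keys) // map iteration order is random; sort for stable output
		for _, k := range keys {
			fmt.Printf("%-10s $%.2f\n", k, totals[k])
		}
	}
}
```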

The only Go SDK with a unified Responses API across all major providers, MCP support, agent handoffs, and durable execution via Temporal and Restate. Ship reliable agents in pure Go.

```bash
go get -u github.com/hastekit/hastekit-sdk-go
```

Switch between LLM providers with a one-line change. The SDK provides a unified interface across OpenAI, Anthropic, Gemini, xAI, and Ollama: the same code works with any provider.
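The `Provider/model` strings in the example that follows suggest the provider is chosen from the prefix of the model string. A hypothetical parser for that format, assuming only the first `/` separates provider from model so that model names containing `/` survive intact; the SDK's own parsing may differ.

```go
package main

import (
	"fmt"
	"strings"
)

// splitModel splits a "Provider/model" string such as "OpenAI/gpt-4o-mini"
// at the first "/" into its provider and model parts.
func splitModel(s string) (string, string, error) {
	provider, model, ok := strings.Cut(s, "/")
	if !ok || provider == "" || model == "" {
		return "", "", fmt.Errorf("model %q: want Provider/model", s)
	}
	return provider, model, nil
}

func main() {
	for _, s := range []string{"OpenAI/gpt-4o-mini", "Anthropic/claude-sonnet-4-5", "Gemini/gemini-2.5-flash", "gpt-4o"} {
		p, m, err := splitModel(s)
		if err != nil {
			fmt.Println("error:", err) // no provider prefix
			continue
		}
		fmt.Printf("provider=%s model=%s\n", p, m)
	}
}
```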

```go
// Switch providers by changing the model string
resp, err := client.NewResponses(ctx, &responses.Request{
	Model: "OpenAI/gpt-4o-mini", // OpenAI
	// Model: "Anthropic/claude-sonnet-4-5", // Anthropic
	// Model: "Gemini/gemini-2.5-flash", // Gemini
	Input: responses.InputUnion{
		OfString: utils.Ptr("Hello!"),
	},
})
if err != nil {
	log.Fatal(err)
}
```

Equip your agents with powerful capabilities. Connect to MCP servers, define custom function tools, or compose agents by using one agent as a tool for another.

```go
// Connect to an MCP server over SSE
mcpClient, err := mcpclient.NewSSEClient(ctx,
	"http://localhost:9001/sse",
)
if err != nil {
	log.Fatal(err)
}

// Create an agent with MCP tools plus custom function tools.
// NewWeatherTool is defined elsewhere in your code (not shown).
agent := client.NewAgent(&hastekit.AgentOptions{
	Name:       "Assistant",
	LLM:        model,
	McpServers: []agents.MCPToolset{mcpClient},
	Tools:      []agents.Tool{NewWeatherTool()},
})
```

Built-in conversation management that scales. Automatically track conversation history, summarize long conversations to stay within context limits, and persist state across sessions.

```go
// Create a conversation manager
history := client.NewConversationManager()

agent := client.NewAgent(&hastekit.AgentOptions{
	Name:    "Assistant",
	LLM:     model,
	History: history, // Enable history
})

// Conversations are linked by Namespace + PreviousMessageID
out, err := agent.Execute(ctx, &agents.AgentInput{
	Namespace:         "user-123",
	PreviousMessageID: lastRunID, // ID from an earlier run (not shown)
	Messages: []responses.InputMessageUnion{
		responses.UserMessage("What's my name?"),
	},
})
if err != nil {
	log.Fatal(err)
}
```

Build agents that survive failures. Integrate with Temporal or Restate for crash recovery, automatic retries, and long-running workflows that can span hours or even days.

```go
// Initialize the SDK with Restate
client, err := hastekit.New(&hastekit.ClientOptions{
	ProviderConfigs: providerConfigs,
	RestateConfig: hastekit.RestateConfig{
		Endpoint: "http://localhost:8081",
	},
})
if err != nil {
	log.Fatal(err)
}

// Create a durable agent that survives crashes
agent := client.NewRestateAgent(&hastekit.AgentOptions{
	Name: "DurableAgent",
	LLM:  model,
})
```

Keep humans in control of critical decisions. Pause agent execution for approval, collect additional input, or implement review workflows before sensitive operations.

```go
// Create a tool that requires human approval before it runs
type DeleteTool struct {
	*agents.BaseTool
}

func NewDeleteTool() *DeleteTool {
	return &DeleteTool{
		BaseTool: &agents.BaseTool{
			RequiresApproval: true, // Pause execution until a human approves
			ToolUnion:        responses.ToolUnion{...}, // tool definition elided
		},
	}
}
```

Unified access to every major LLM provider

Comprehensive support across all major LLM providers and capabilities.

| Provider | Text | Image Generation | Image Processing | Tool Calls | Reasoning | Streaming | Structured Output | Embeddings | Speech |
|---|---|---|---|---|---|---|---|---|---|
| OpenAI | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |
| Anthropic | ✓ | - | ✓ | ✓ | ✓ | ✓ | ✓ | - | - |
| Gemini | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |
| xAI | ✓ | - | - | ✓ | ✓ | ✓ | ✓ | - | - |
| Ollama | ✓ | - | - | ✓ | ✓ | ✓ | ✓ | - | - |

Start with a single command. No complex setup required.

Run the HasteKit gateway with a single command. It includes both the LLM gateway and the Agent gateway.

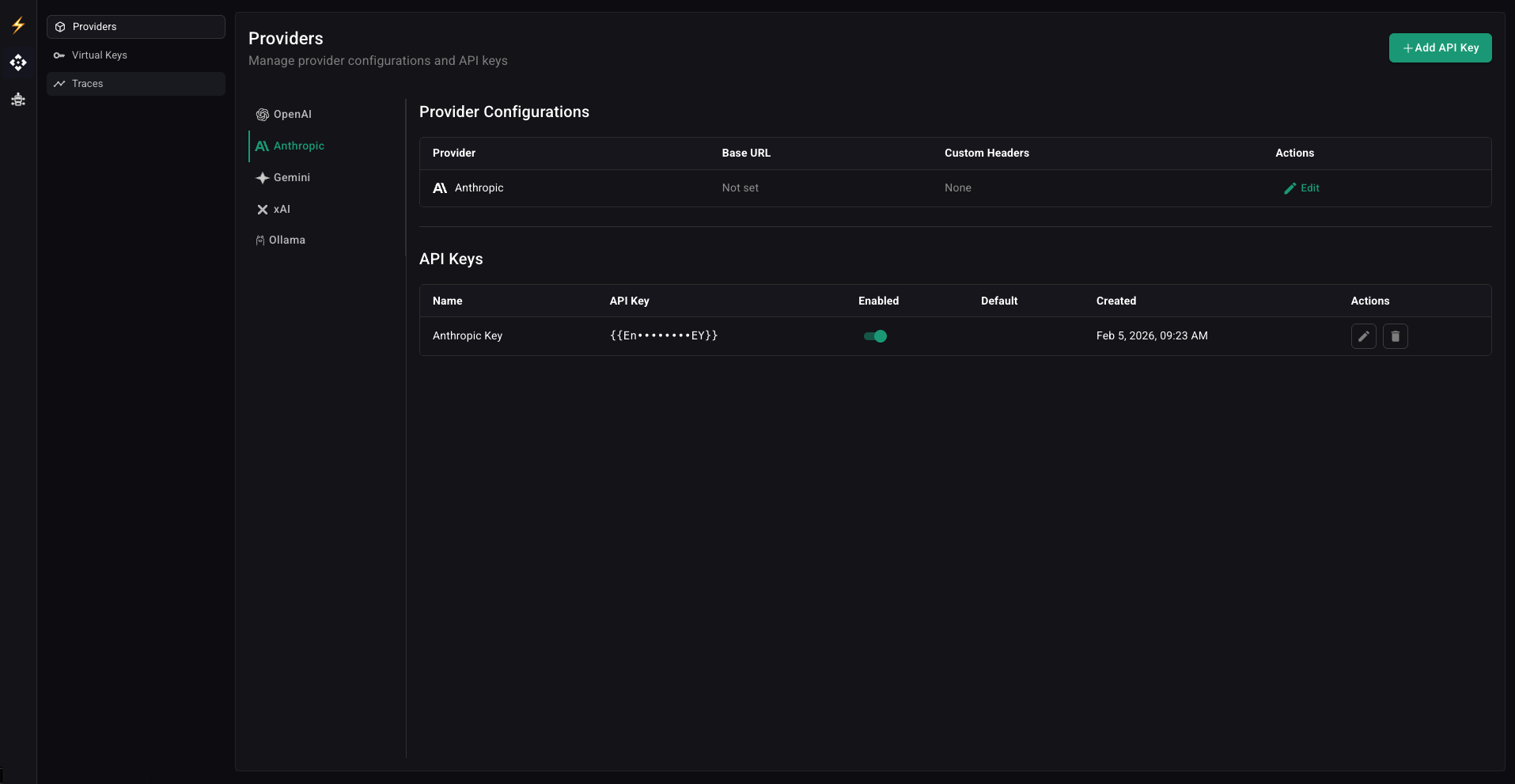

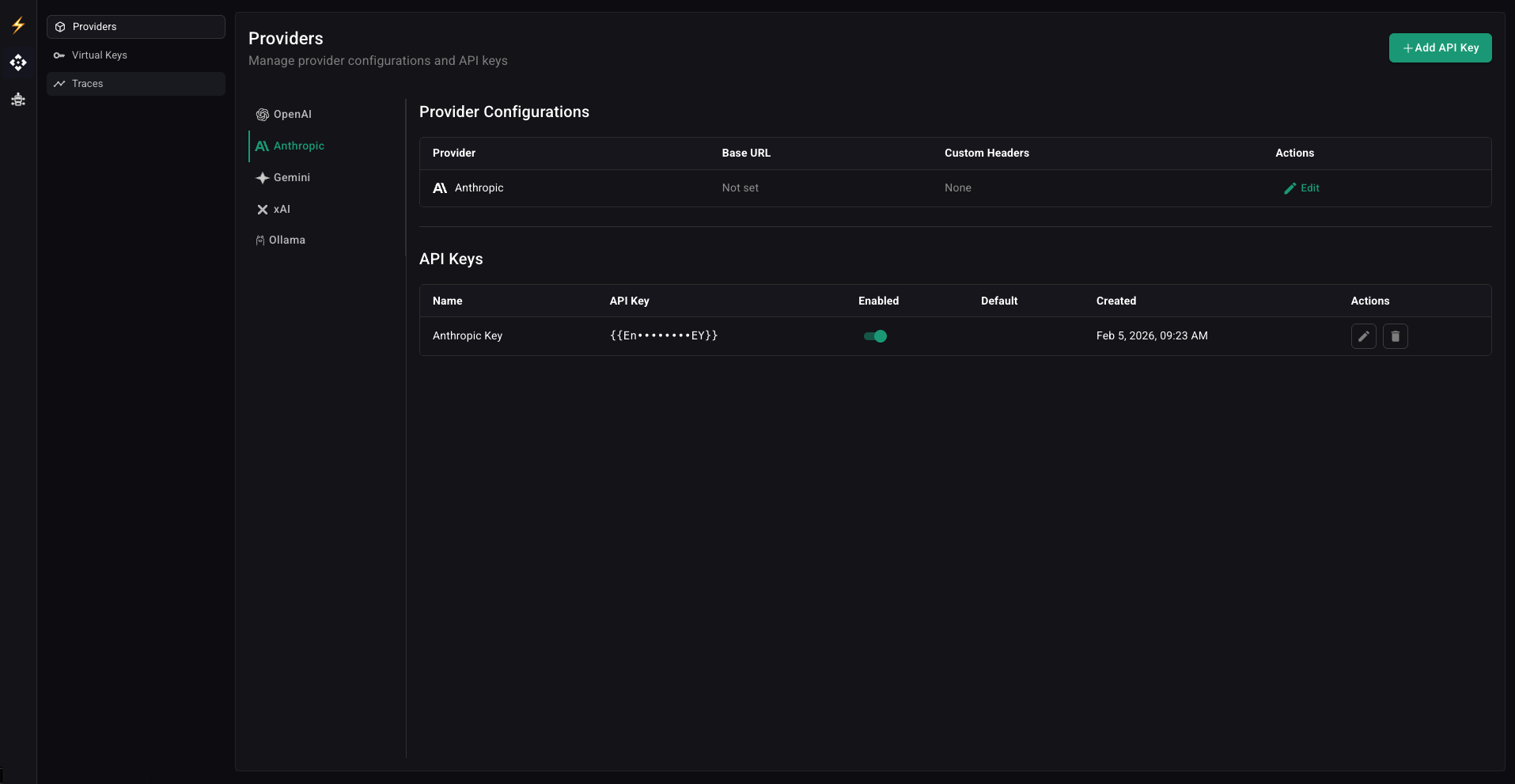

```bash
npx @hastekit/ai-gateway
```

Add your LLM provider API keys through the dashboard at localhost:3000.

Generate a virtual key with your desired permissions, rate limits, and model access.

```text
sk-hk-abc123def456...
```

Use your existing SDK with the gateway URL, or build agents with the no-code builder.

```python
from openai import OpenAI

# Point the OpenAI SDK at the local gateway
client = OpenAI(
    base_url="http://localhost:6060/api/gateway/openai",
    api_key="your-virtual-key",
)
```

Start building production-grade AI agents today. Try the Gateway or explore the SDK.