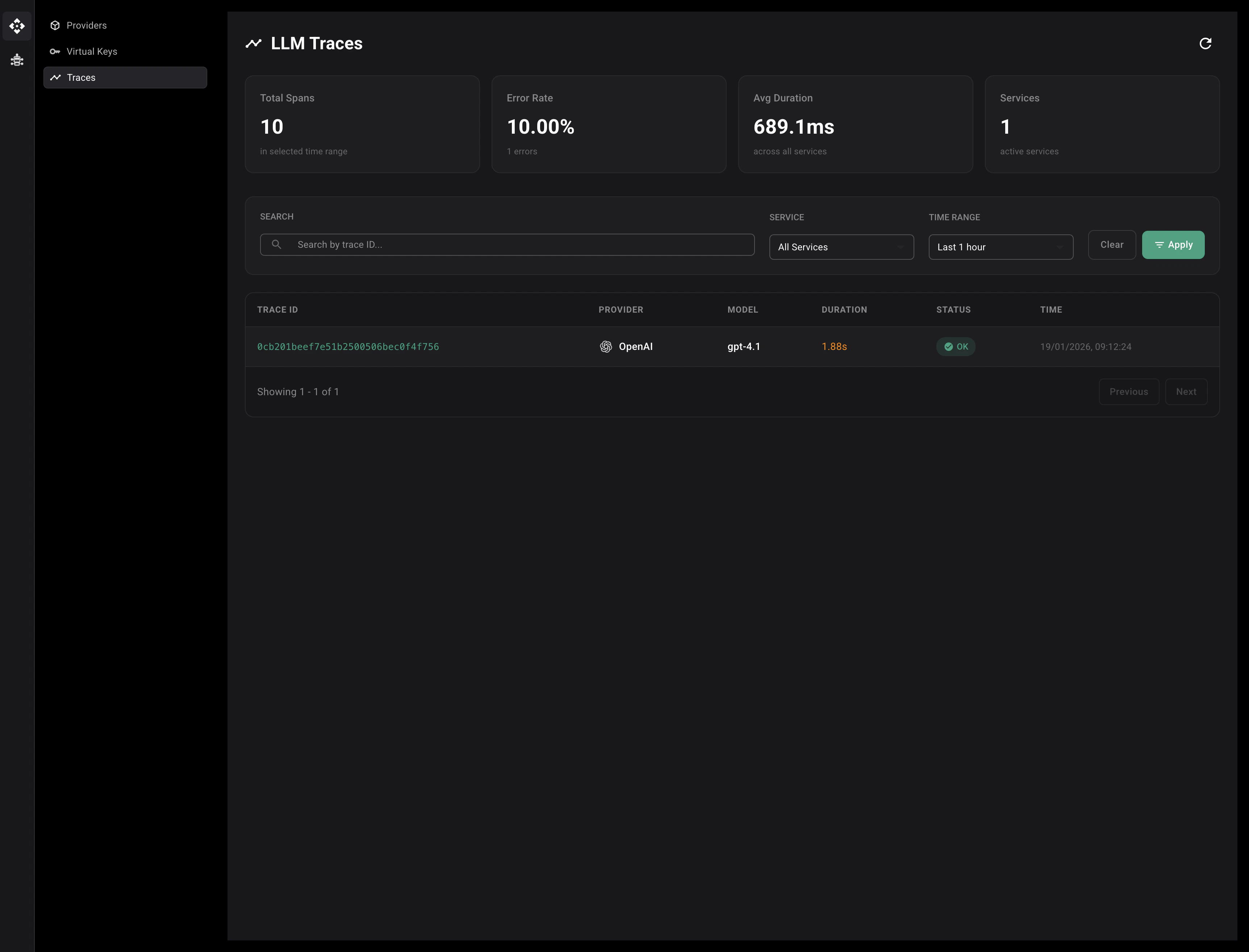

The Tracing page provides comprehensive observability for all LLM requests flowing through the HasteKit LLM Gateway. It allows you to monitor request performance, debug issues, and analyze usage patterns across different providers, models, and virtual keys.

Overview

Tracing in the HasteKit LLM Gateway uses OpenTelemetry to collect and display detailed information about every LLM request. Each request generates a trace that includes:

- Trace ID: Unique identifier for the request

- Provider: Which LLM provider was used (OpenAI, Anthropic, Gemini, etc.)

- Model: Specific model that processed the request

- Duration: How long the request took to complete

- Status: Whether the request succeeded or failed

- Timestamp: When the request occurred
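To make the fields above concrete, here is a minimal sketch of how one trace row could be modeled if you copied the values out of the table for your own analysis. The class name `TraceRecord`, the field names, the `"ok"`/`"error"` status values, the millisecond unit, and the `error_rate` helper are all assumptions made for illustration; they are not the gateway's schema or API.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class TraceRecord:
    """Hypothetical in-memory shape of one trace row (not the gateway's schema)."""
    trace_id: str        # OpenTelemetry trace IDs are 16 bytes, usually shown as 32 hex characters
    provider: str        # e.g. "openai", "anthropic", "gemini"
    model: str           # model that processed the request
    duration_ms: float   # assumed unit: milliseconds
    status: str          # assumed values: "ok" or "error"
    timestamp: datetime  # when the request occurred


def error_rate(traces: list[TraceRecord]) -> float:
    """Return the fraction of traces whose status is "error" (0.0 for an empty list)."""
    if not traces:
        return 0.0
    failed = sum(1 for t in traces if t.status == "error")
    return failed / len(traces)


if __name__ == "__main__":
    sample = [
        TraceRecord("4bf92f3577b34da6a3ce929d0e0e4736", "openai", "example-model",
                    812.5, "ok", datetime(2024, 1, 1, tzinfo=timezone.utc)),
        TraceRecord("00f067aa0ba902b7a3ce929d0e0e4736", "anthropic", "example-model",
                    1530.0, "error", datetime(2024, 1, 1, tzinfo=timezone.utc)),
    ]
    print(error_rate(sample))
```

A frozen dataclass is used here because a trace describes a completed request and should not change after it is recorded.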

Searching and Filtering Traces

The Traces page provides several ways to find specific traces:

Search by Trace ID

- Enter a trace ID in the Search field

- The table will filter to show only matching traces

Filter by Service

- Click the Service dropdown

- Select a specific service, or choose “All Services” to see all traces

Filter by Time Range

- Click the Time Range dropdown

- Select a predefined time range:

  - Last 1 hour

  - Last 6 hours

  - Last 24 hours

  - Last 7 days

  - Last 30 days

  - Custom range
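The presets above each correspond to a window that ends at the current time. As a rough sketch of that mapping, the snippet below turns a preset label into a `(start, end)` pair. The function name `resolve_time_range`, the use of UTC, and the handling of "Custom range" as an explicit pair of datetimes are assumptions for illustration, not a description of how the gateway computes the window.

```python
from datetime import datetime, timedelta, timezone

# Preset labels as they appear in the Time Range dropdown.
PRESETS = {
    "Last 1 hour": timedelta(hours=1),
    "Last 6 hours": timedelta(hours=6),
    "Last 24 hours": timedelta(hours=24),
    "Last 7 days": timedelta(days=7),
    "Last 30 days": timedelta(days=30),
}


def resolve_time_range(label, now=None, custom=None):
    """Return (start, end) for a preset label or a custom range.

    `custom` is a hypothetical (start, end) tuple used only for "Custom range".
    """
    if label == "Custom range":
        if custom is None:
            raise ValueError("Custom range requires a (start, end) pair")
        start, end = custom
        if start > end:
            raise ValueError("start must not be later than end")
        return start, end
    if label not in PRESETS:
        raise ValueError(f"Unknown time range: {label!r}")
    if now is None:
        now = datetime.now(timezone.utc)  # assumed: windows are anchored to UTC "now"
    return now - PRESETS[label], now


if __name__ == "__main__":
    start, end = resolve_time_range("Last 6 hours")
    print(start.isoformat(), end.isoformat())
```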

Applying Filters

- Set your desired filters (Service, Time Range)

- Optionally enter a trace ID in the search field

- Click the Apply button to apply all filters

- Click Clear to reset all filters to defaults
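Conceptually, clicking Apply combines the Service, Time Range, and trace ID inputs so that only traces satisfying all of them remain. The sketch below illustrates that combined behavior over an in-memory list. The `Trace` class, the `apply_filters` function, and the case-insensitive substring match on the trace ID are assumptions; the page does not state whether the search is exact or partial.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Trace:
    """Hypothetical minimal trace row used only for this example."""
    trace_id: str
    service: str
    timestamp: datetime


def apply_filters(traces, service=None, start=None, end=None, trace_id_query=""):
    """Keep traces that match every filter that is set.

    service=None stands in for "All Services"; start/end=None means no time bound.
    Calling with no arguments mirrors Clear: nothing is filtered out.
    """
    query = trace_id_query.strip().lower()
    result = []
    for t in traces:
        if service is not None and t.service != service:
            continue
        if start is not None and t.timestamp < start:
            continue
        if end is not None and t.timestamp > end:
            continue
        # Assumed matching rule: case-insensitive substring of the trace ID.
        if query and query not in t.trace_id.lower():
            continue
        result.append(t)
    return result


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    rows = [
        Trace("abc123", "gateway", now - timedelta(minutes=5)),
        Trace("def456", "agents", now - timedelta(hours=3)),
    ]
    recent = apply_filters(rows, service="gateway", start=now - timedelta(hours=1), end=now)
    print([t.trace_id for t in recent])
```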